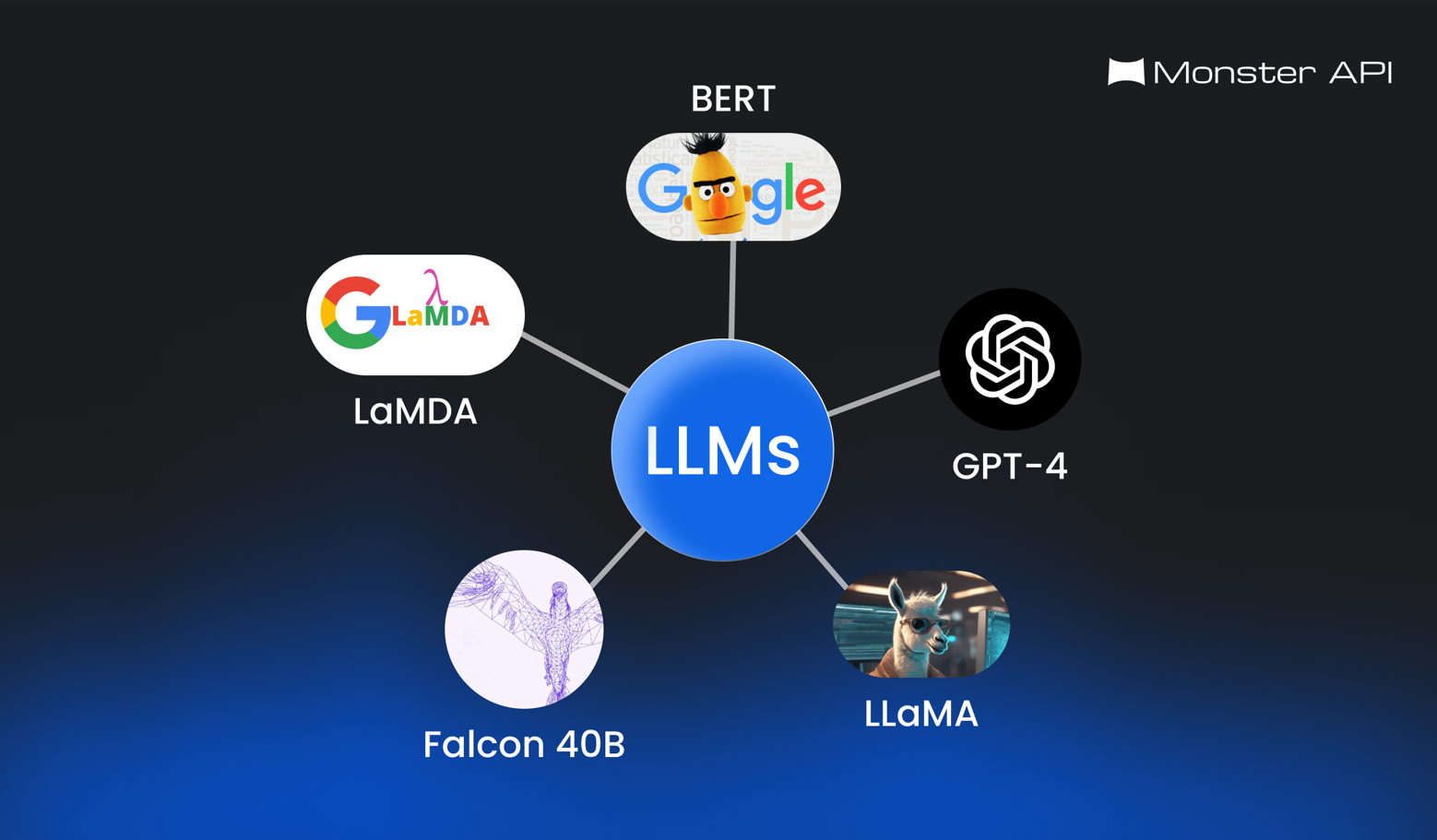

Introduction to LLMs - What Are LLMs, How Do LLMs Work?

Overview of Large Language Models

Large language models (LLMs) have changed how AI systems are built and used. They produce text that often resembles human writing, although they work by learning statistical patterns in data rather than by reasoning the way people do. They are used across academia, the technology industry, entertainment, and the research community.

LLMs are trained on extensive datasets and use intricate architectures, such as decoder-only stacks or encoder-decoder structures. Their layers contain millions to billions of parameters and perform computations through operations such as attention mechanisms and feed-forward transformations.
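To make the attention operation mentioned above more concrete, here is a minimal sketch of scaled dot-product attention in plain Python. It assumes the queries, keys, and values are already given as small lists of numbers. Real models compute them with learned weight matrices and run the operation on far larger tensors, so treat the function names and the toy vectors as illustrative assumptions.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    dim = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(dim).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim)
            for k in keys
        ]
        weights = softmax(scores)
        # Each output is a weighted average of the value vectors.
        mixed = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(mixed)
    return outputs

# Three made-up 2-dimensional token vectors (illustrative values only).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
result = attention(tokens, tokens, tokens)
for row in result:
    print([round(x, 3) for x in row])
```

Each output row is a blend of all the value vectors, weighted by how similar the corresponding query is to each key. That is how a token's representation can take its context into account.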

The capabilities of an LLM can be enhanced with techniques such as reinforcement learning and transfer learning. These allow the model to handle diverse tasks, including comprehension, synthesis, translation, question answering, and, in multimodal systems, image and text generation.

How Do LLMs Work?

Large language models (LLMs) are like superpowered language learners. They’re trained on massive amounts of text data to understand and generate human language. Here's a simplified breakdown of how they work:

- Training on a massive scale: Imagine an LLM as a student and the data it's trained on as its textbooks. These textbooks can be anything: books, articles, code, and even web pages. Any type of text can be used to train LLMs.

- Deep learning: LLMs use a specific type of machine learning called deep learning. Deep learning involves complex algorithms inspired by the human brain, called neural networks. These networks analyze the data, identifying patterns and connections between words and phrases.

- Predicting the next word: During training, the LLM is constantly challenged to predict the next word in a sequence. For example, given the sentence "The cat is on the...", the LLM might predict "mat". As it processes more data and encounters different contexts, it refines its understanding of language.

- More than memorizing: While the process involves a lot of data, LLMs aren't simply memorizing everything. Instead, they learn the underlying rules and relationships within language, allowing them to adapt to new situations and generate different creative text formats, like poems or code.

- Fine-tuning for specific tasks: Sometimes, LLMs are "fine-tuned" for specific tasks. This involves additional training focused on a particular goal, like writing different kinds of creative content or translating languages. Solutions like the MonsterAPI LLM finetuner simplify the complex fine-tuning process.
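The "predicting the next word" idea from the list above can be illustrated with a toy model that simply counts which word follows which. This is a deliberately simplified stand-in, not how an LLM is implemented: real models use neural networks over long contexts, while this sketch looks only at the previous word. The corpus and the `predict_next` helper are made up for illustration.

```python
from collections import Counter, defaultdict

# A tiny made-up corpus; real LLMs train on vastly more text.
corpus = [
    "the cat is on the mat",
    "the dog is on the mat",
    "the cat is on the sofa",
]

# Count which word follows each word across the corpus.
next_word_counts = defaultdict(Counter)
for sentence in corpus:
    words = sentence.split()
    for current, following in zip(words, words[1:]):
        next_word_counts[current][following] += 1

def predict_next(word):
    """Return the most frequently observed follower of `word`, or None."""
    followers = next_word_counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]

print(predict_next("on"))  # the
print(predict_next("is"))  # on
```

Even this crude counter picks up regularities from its data. An LLM does something similar in spirit, but it learns far richer patterns and conditions on much more context than a single word.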

Training and Fine-Tuning Techniques

LLM training involves two primary steps: initialization and iterative improvement through gradient descent with backpropagation. Initial weights are set randomly, and subsequent adjustments aim to minimize a loss function that corresponds to the training objective.
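The two steps can be sketched on a problem small enough to follow by hand. The example below fits a straight line instead of a language model, so the gradients are written out directly rather than computed by backpropagation through many layers. The data, learning rate, and step count are assumptions chosen for illustration.

```python
import random

random.seed(0)  # fixed seed so the run is repeatable

# Toy data generated from y = 2x + 1 (an assumed target for illustration).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2.0 * x + 1.0 for x in xs]

# Step 1: initialization with small random weights.
w = random.uniform(-1.0, 1.0)
b = random.uniform(-1.0, 1.0)

def loss(w, b):
    """Mean squared error between predictions and targets."""
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

initial_loss = loss(w, b)
learning_rate = 0.05

# Step 2: iterative improvement by gradient descent.
for step in range(2000):
    # Gradients of the loss with respect to w and b (chain rule by hand).
    grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / len(xs)
    grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / len(xs)
    # Move each weight a small step against its gradient.
    w -= learning_rate * grad_w
    b -= learning_rate * grad_b

final_loss = loss(w, b)
print(f"w={w:.3f}, b={b:.3f}, loss {initial_loss:.3f} -> {final_loss:.6f}")
```

An LLM follows the same loop, with billions of weights in place of `w` and `b` and a next-token prediction loss in place of the squared error.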

Pretraining techniques, such as masked language modeling (MLM), expose the model to a wide variety of unlabeled text. Other strategies include causal language modeling (CLM), autoencoders, and denoising.
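The difference between causal and masked language modeling shows up in how training examples are built from the same sentence. The sketch below is a simplified illustration: the `[MASK]` symbol, the masking probabilities, and both helper functions are assumptions made for this example, and production pipelines work on subword tokens rather than whole words.

```python
import random

MASK = "[MASK]"  # placeholder token; the exact symbol varies by model

def causal_examples(tokens):
    """Causal LM: predict each token from the tokens before it."""
    return [(tokens[:i], tokens[i]) for i in range(1, len(tokens))]

def masked_example(tokens, mask_prob=0.15, rng=None):
    """Masked LM: hide some tokens and ask the model to recover them."""
    rng = rng or random.Random(0)
    masked, targets = [], {}
    for position, token in enumerate(tokens):
        if rng.random() < mask_prob:
            masked.append(MASK)
            targets[position] = token  # label only the hidden positions
        else:
            masked.append(token)
    return masked, targets

tokens = "the cat is on the mat".split()

# Causal: one (context, next token) pair per position.
for context, target in causal_examples(tokens):
    print(context, "->", target)

# Masked: one corrupted copy of the sentence plus the hidden answers.
masked, targets = masked_example(tokens, mask_prob=0.3)
print(masked, targets)
```

A causal model only ever sees the words to the left of its target, which suits text generation. A masked model sees context on both sides of each gap, which suits tasks that need an understanding of the whole sentence.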

Fine-tuning follows pretraining. It adapts the model to a domain-specific problem rather than general language comprehension.

Hybrid approaches that combine unsupervised and supervised learning can enhance a model’s capabilities. Domain adaptation, a form of transfer learning, tailors generic LLMs to specific problem sets through fine-tuning with carefully chosen hyperparameters.

Additionally, meta-learning strategies, such as automated algorithm configuration and tuning via reinforcement learning, offer promise. Meta-learners adaptively select settings for optimizer choices, learning rates, batch sizes, and dropout factors, minimizing experimentation overhead and increasing efficiency and reliability.
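Automated configuration can be illustrated with a much simpler technique than reinforcement learning: random search over the same kinds of settings. The sketch below is a stand-in, not a meta-learner. The `validation_loss` function is hypothetical and uses a made-up formula so the example runs instantly. In practice, each trial would train a model and measure its loss on held-out data.

```python
import math
import random

def validation_loss(learning_rate, batch_size, dropout):
    """Hypothetical stand-in for 'train a model, then measure validation loss'.

    The formula is invented for this example; it is lowest near
    learning_rate=1e-3, batch_size=32, dropout=0.1.
    """
    return (
        (math.log10(learning_rate) + 3) ** 2
        + ((batch_size - 32) / 32) ** 2
        + (dropout - 0.1) ** 2
    )

def random_search(trials, seed=0):
    """Sample configurations at random and keep the best one seen."""
    rng = random.Random(seed)
    best_config, best_loss = None, float("inf")
    for _ in range(trials):
        config = {
            "learning_rate": 10 ** rng.uniform(-5, -1),  # log-uniform sample
            "batch_size": rng.choice([8, 16, 32, 64, 128]),
            "dropout": rng.uniform(0.0, 0.5),
        }
        current = validation_loss(**config)
        if current < best_loss:
            best_config, best_loss = config, current
    return best_config, best_loss

best_config, best_loss = random_search(trials=200)
print(best_config, round(best_loss, 4))
```

More sophisticated approaches replace the random sampling with a strategy that learns from earlier trials, but the loop stays the same: propose settings, evaluate them, and keep the best.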

Fine-tuning LLMs comes with a number of challenges. The primary one is the limited availability of computational resources such as GPUs or TPUs, which can slow down or block the process.

MonsterAPI makes fine-tuning LLMs easier by offering:

- Simplified Setup: MonsterAPI simplifies setting up GPU environments for fine-tuning ML models with an intuitive interface, eliminating manual hardware specifications, software installations, CUDA toolkit setups, and other low-level configurations.

- Memory Optimization: To address GPU memory constraints, MonsterAPI optimizes memory utilization during fine-tuning so that jobs fit within the available GPU memory, making the process more accessible and manageable for developers.

- Cost Reduction: By leveraging a decentralized GPU network, MonsterAPI offers on-demand access to low-cost GPU instances, reducing the GPU costs associated with fine-tuning ML models.

- Standardized Practices: With predefined tasks and customizable options, MonsterAPI streamlines the fine-tuning process, guiding users through best practices and removing the need to navigate complex documentation and forums.

Check out the MonsterAPI no-code LLM FineTuning solution.

Practical Applications of Large Language Models (LLMs)

Large language models (LLMs) have become a common part of everyday life. They are transforming many aspects of human interaction and business operations.

Some Use Cases of LLMs in Everyday Life:

- Enhancing Digital Communication: LLMs power virtual assistants and chatbots that offer a personalized experience in real-time conversations. Many of the chatbots you see on websites today are powered by LLMs.

- Content Creation Simplification: LLMs streamline content creation by generating articles, social media posts, and marketing copy, work that used to take days.

- Improved Language Translation: LLMs help break down language barriers by letting users translate between languages almost instantly.

- Coding Assistance: One of the biggest use cases of LLMs is helping write code for websites, apps, and other digital products within minutes. As a result, even someone with little coding knowledge can build a simple website or app.

Some Use Cases of LLMs Across Industries:

- Improving Finance Industry: LLMs have made their way into the financial industry. They analyze financial data to help users make better investment decisions, assess risk based on historical data, and detect financial fraud.

- Enhance Shopping Experiences: LLMs can be used to personalize shopping experiences by recommending products and optimizing pricing strategies.

- Take Education To The Next Level: LLMs support personalized learning experiences by providing educational content. Anyone can learn about almost anything with the help of LLMs.

- Media and Entertainment: LLMs can generate content ideas and recommendations and streamline content production processes. Related generative models, such as text-to-image and text-to-video generators (for example, OpenAI’s Sora), can help fuel creativity.

As LLMs continue to evolve, their impact on everyday life and various industries is expected to grow, revolutionizing human-machine interaction and business operations.

Evaluation Metrics

Measuring the effectiveness of large language models (LLMs) involves comparing model outputs against benchmarks such as human-generated responses or expert-derived ground-truth solutions. Standard measures include BLEU, ROUGE, METEOR, F1 score, and exact-match percentage, which serve as proxies for fluency and relevance. Metric selection is itself a challenge, because different metrics prioritize different qualities. Choosing a suite of metrics aligned with the target scenario is therefore crucial.
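Two of the simpler metrics, exact match and token-level F1, can be sketched in a few lines. The normalization here (lowercasing and splitting on whitespace) is an assumption made to keep the example short. Published benchmarks often also strip punctuation and articles, so scores from this sketch are not directly comparable to theirs.

```python
from collections import Counter

def normalize(text):
    """Lowercase and split on whitespace (a deliberately simple normalizer)."""
    return text.lower().split()

def exact_match(prediction, reference):
    """True only when the normalized token sequences are identical."""
    return normalize(prediction) == normalize(reference)

def token_f1(prediction, reference):
    """Harmonic mean of token precision and recall against the reference."""
    pred, ref = normalize(prediction), normalize(reference)
    if not pred or not ref:
        return float(pred == ref)  # both empty counts as a perfect match
    # Multiset intersection counts each shared token at most as often
    # as it appears in both strings.
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)

reference = "the cat is on the mat"
print(exact_match("The cat is on the mat", reference))  # True
print(round(token_f1("the cat sat on the mat", reference), 3))  # 0.833
```

The second prediction fails exact match because of one wrong word, yet it still earns a high F1 score. This is why the two metrics are often reported together.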

To compare multiple LLMs, multimetric evaluation systems have emerged, integrating various metrics into comprehensive scoring functions. Another trend involves adopting novel paradigms like "naturalness" and "informativeness," assessing response originality and relevance, respectively.
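One simple form of such a scoring function is a weighted average of individual metrics. The sketch below assumes every metric is already scaled to the range 0 to 1. The model names, scores, and weights are all made up for illustration and do not describe any real system.

```python
def composite_score(metrics, weights):
    """Weighted average of metric values that are already scaled to [0, 1]."""
    total_weight = sum(weights[name] for name in metrics)
    weighted_sum = sum(metrics[name] * weights[name] for name in metrics)
    return weighted_sum / total_weight

# Made-up scores for two hypothetical models, for illustration only.
model_scores = {
    "model_a": {"bleu": 0.40, "rouge_l": 0.50, "exact_match": 0.30},
    "model_b": {"bleu": 0.35, "rouge_l": 0.55, "exact_match": 0.45},
}

# Assumed weights: this scenario values exact answers twice as much.
weights = {"bleu": 1.0, "rouge_l": 1.0, "exact_match": 2.0}

ranking = sorted(
    model_scores,
    key=lambda name: composite_score(model_scores[name], weights),
    reverse=True,
)
for name in ranking:
    print(name, round(composite_score(model_scores[name], weights), 3))
```

The choice of weights encodes what the target scenario values, so different weights can reverse the ranking of the same two models.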

Conclusion

Large language models (LLMs) are trained on massive amounts of text data to understand and use language like humans.

This allows them to drive innovation and efficiency in various fields, from improving communication to boosting research and development. As LLMs continue to develop, they have the potential to completely transform how we interact with machines, work, and solve problems in the future.

Resource Section:

Sign up on MonsterAPI to get FREE credits and try out our no-code LLM FineTuning solution today!